Naturalization of Text: Inserting Disfluencies

Alfianto Widodo, Bowman Brown, Ira Deshmukh, Parth Vipul Shah, Ryan Luu · University of Southern California

A probabilistic NLP system that augments clean text with corpus-derived disfluencies, bridging the gap between fluent written language and the paralinguistic irregularities of natural human speech.

Research Poster

Poster presented at USC Viterbi as part of my masters program

Introduction

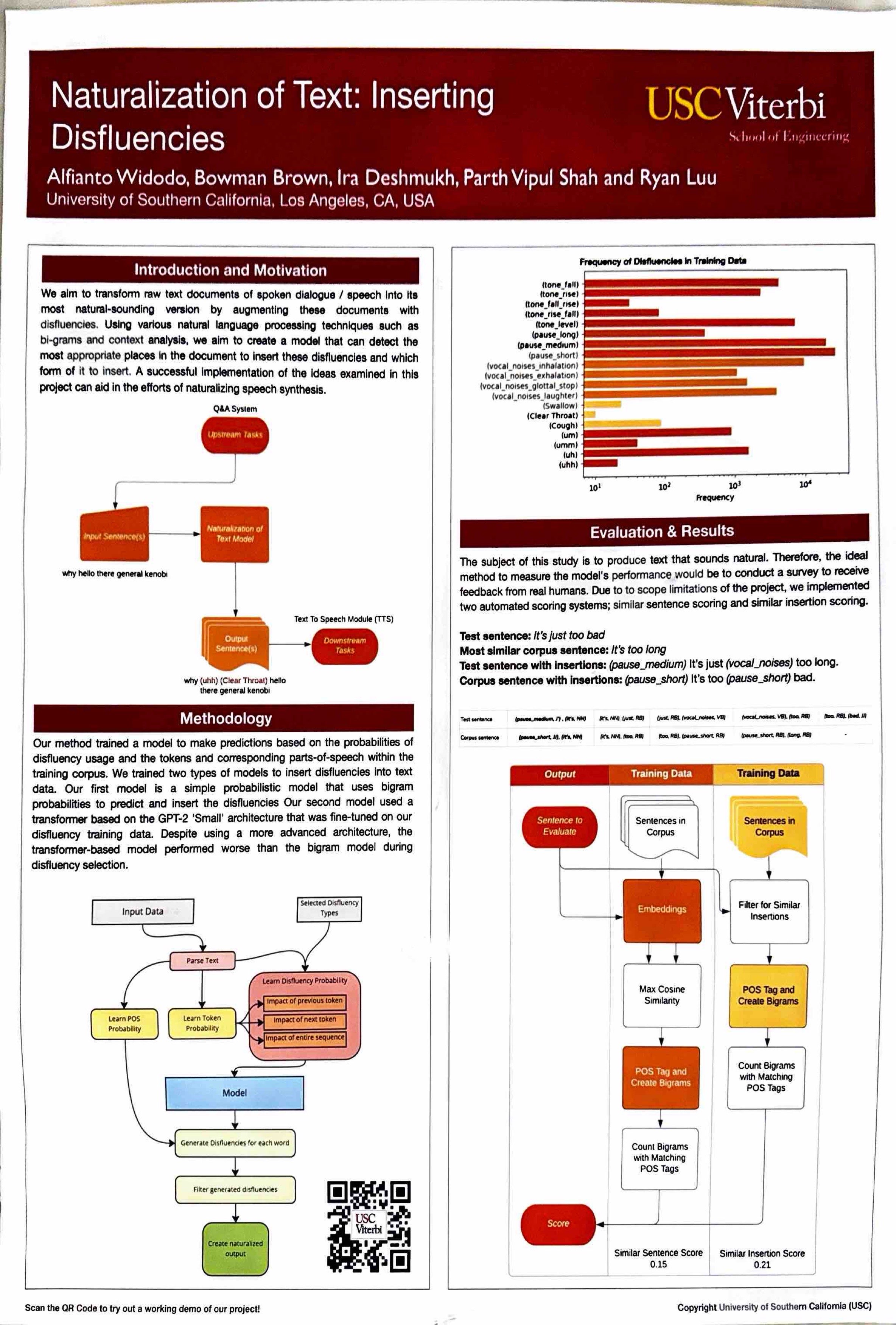

Back in 2022, AI-generated speech was at an interesting crossroads. GPT-2 had already shown the world that language models could produce surprisingly coherent text, and GPT-3 was quietly powering a handful of apps behind the scenes. But ChatGPT hadn't launched yet. Most people's exposure to AI voices was still Siri stumbling over your contact names or Alexa reading out a recipe with all the warmth of a tax document. The technology was improving fast, but something was still clearly off.

Part of what was off was the stuff we usually think of as mistakes. The “ums” and “uhs” when you're searching for a word. The brief pause before you commit to a point. The half-started sentence you reroute mid-thought. These are called disfluencies, and they're not imperfections — they're signals. They tell the listener you're thinking, that you care about saying the right thing, that there's a real person on the other end. Strip them out and speech starts to feel uncanny, a little too clean, a little too fast.

This project flips the usual NLP problem on its head. Instead of removing disfluencies from speech transcripts (which is what most research at the time focused on), we asked: can we put them back in? Given a clean, written sentence, can a model figure out where a real person would have hesitated, and insert the right kind of filler at the right moment? To do that, we trained on the Santa Barbara Corpus of Spoken American English, a collection of real transcribed conversations annotated down to every breath and pause.

Live Demo

Bigram Model · Client-SideTry a sample or type in your own text and hit transform:

Your naturalized text will appear here...

Technical Architecture

Model 1Bigram Language Model

How it learns

Example: what the table looks like

Training. The corpus is tokenized and augmented with [START] / [END] sentinal tokens. For each bigram (wᵢ, wᵢ₊₁) in which either element is a disfluency token, two probability tables are populated: all_bigrams stores the proportionalized co-occurrence frequency of every (word, disfluency) or (disfluency, word) pair; pos_bigrams stores the proportionalized frequency keyed by the Penn Treebank POS tag of the content word and the disfluency label, capturing positional tendencies by syntactic category.

How it inserts — example: “I think we should go”

① Label each word's grammatical role

② Score each position — how often does that word type precede a disfluency in real speech?

③ Pick the top-scoring spots, look up the most likely disfluency for each, and insert

Inference: position selection (pos_selection_next). Each token position x is assigned a probability weight equal to Σ pos_bigrams[POS(xᵢ)], the sum of all disfluency probabilities associated with that POS tag in training data. The [START] token receives a downscaled weight of Σ / 10 to penalize sentence-initial insertion. A weighted random sample without replacement then selects ⌊modifier × |tokens|⌋ positions to modify.

Inference: disfluency selection. For each selected position idx, candidate disfluencies are gathered from idx_by_first[tokens[idx]] and idx_by_second[tokens[idx+1]] and merged into a single candidate set. Frequencies are re-normalized and a single disfluency is drawn by weighted sampling. Insertions are applied in reverse index order to preserve positional integrity, and sentinel tokens are stripped from the final output.

Model 2GPT-2 Fine-tuned Transformer(discarded from evaluation)

Reading one word at a time

I

skipI think

insert (um)I think (um) we

skipI think (um) we should

insert (pause_short)I think (um) we should (pause_short) go

doneUnlike the bigram model, the transformer considers the entire sentence so far before deciding — not just the two words on either side.

The transformer model was built on HuggingFace GPT-2 Small (117M parameters), motivated by the hypothesis that full-sentence context would improve disfluency placement over a local bigram window. Custom tokens for each disfluency type were injected into the GPT2TokenizerFast vocabulary, and the model embedding matrix was resized accordingly. Training used the AdamW optimizer for 100 epochs with learning rate 0.005 and batch size 16.

During inference the model operates autoregressively: at each step it receives the partially-built output and predicts a distribution over the next token. The logits are masked to include only valid disfluency tokens; if a disfluency is sampled it is inserted, otherwise the next ground-truth word from the input sentence is appended. This constrained decoding guarantees that the output is the original sentence plus disfluencies with no other modifications. Despite the architectural advantage, the transformer underperformed the bigram model in evaluation and was excluded from reported results.

Evaluation & Results

Human evaluation was infeasible at project scope, so two automated scoring metrics were designed to measure disfluency quality without surveys:

Similar Sentence Score (SSS). For each output sentence, the entire training corpus is encoded with sBERT (all-MiniLM-L6-v2) embeddings and the most semantically similar corpus sentence is retrieved via max cosine similarity. Both the output and the retrieved corpus sentence are POS-tagged with NLTK and split into bigrams. A match is counted when a bigram pair shares a disfluency at the same position and the adjacent content-word POS tags agree. Two modes: hard-matching requires exact disfluency type agreement; soft-matching requires only that both disfluencies belong to the same class (e.g., any pause matches any pause).

Similar Insertion Score (SIS). Rather than semantic sentence matching, SIS directly scans the output for insertions and searches the entire corpus for bigrams with matching disfluency type and adjacent POS tags. Each positional and tag match contributes +1 to the score, normalized over sentence count. A gold-standard SIS was computed on manually transcribed interview data to benchmark the metric itself — the numerical proximity of the dataset SIS (0.25) to the gold SIS (0.406) validates the scoring approach.

| Scoring Method | Score |

|---|---|

| Similar Sentence Score (hard-matching) | 0.156 |

| Similar Sentence Score (soft-matching) | 0.215 |

| Similar Insertion Score (dataset) | 0.250 |

| Similar Insertion Score (gold standard) | 0.406 |

The bigram model's SIS dataset score of 0.250 compared to the gold standard of 0.406 indicates the model captures real disfluency patterns but applies them somewhat less contextually than transcribed human speech, which is consistent with the limited 13KB training corpus from a single source.

If you are interested in reading the full paper, feel free to read it here.

The Team

Alfianto Widodo · Bowman Brown · Ira Deshmukh · Parth Vipul Shah · Ryan Luu